Platform Framework

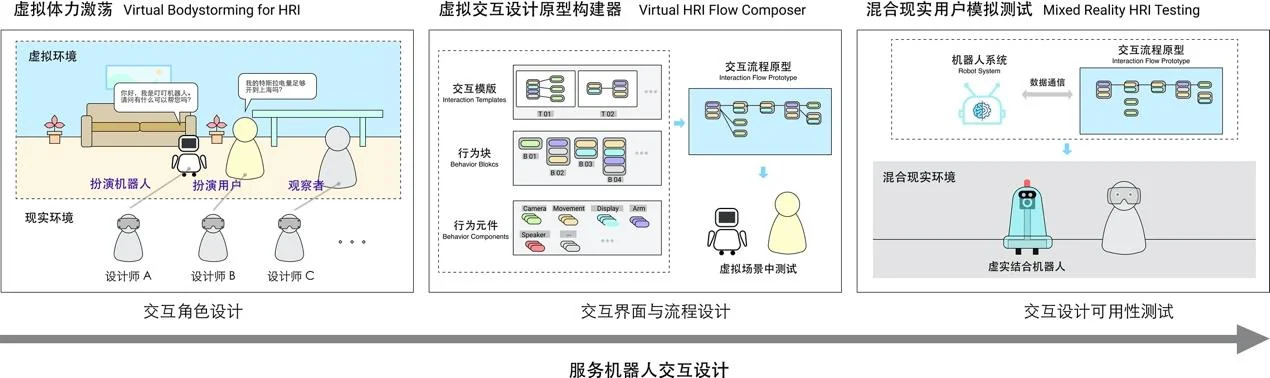

Service robot interaction design lacks mature design methods and tools. Existing approaches such as paper prototyping and Wizard of Oz are limited in their ability to simulate the multimodal, spatial, and embodied nature of robot interactions. To address this gap, the platform applies virtual simulation technologies to cover the full design cycle through three integrated modules:

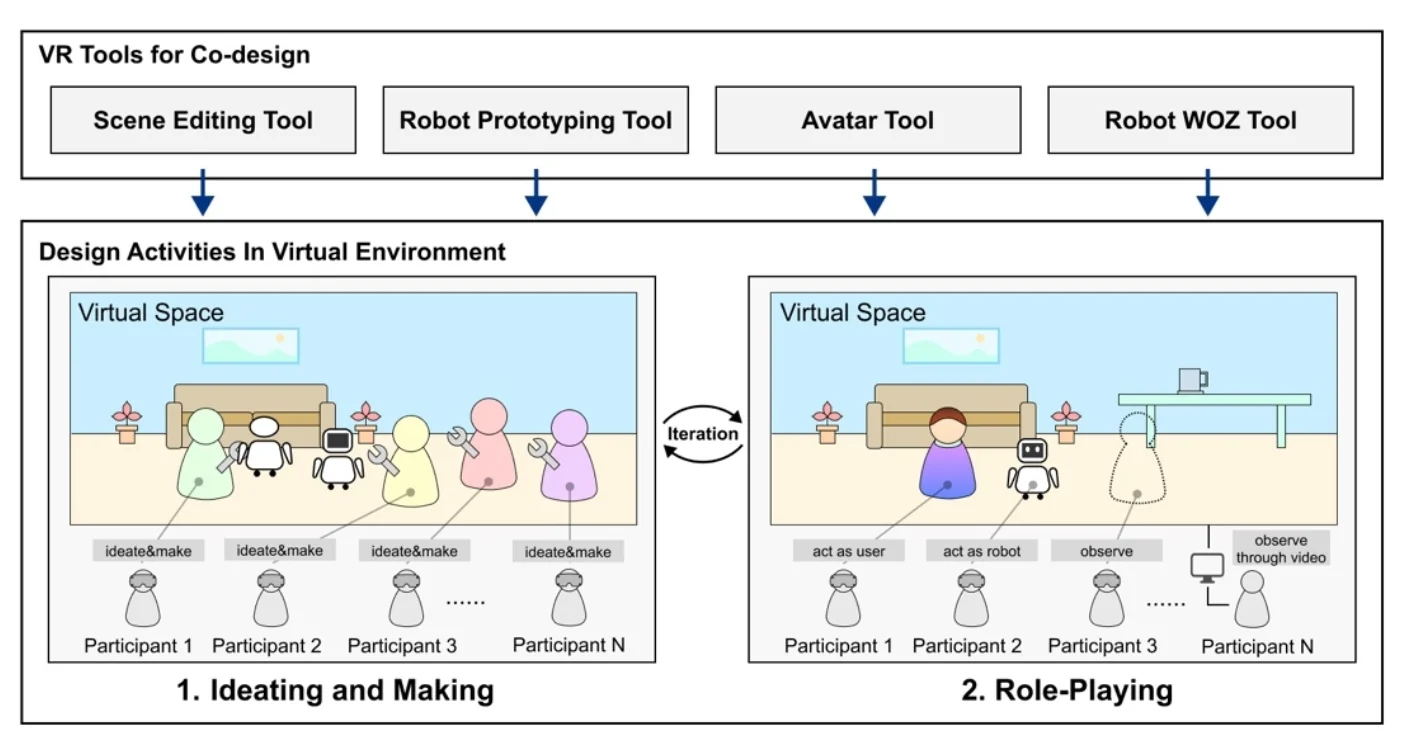

- Virtual Bodystorming supports the conceptual character design phase. Designers collaboratively build robot application scenes and robot prototypes in a shared VR environment, then perform role-play (both robot and user) to explore and validate design concepts.

- Virtual HRI Flow Composer supports the interface and flow design phase. Designers build interaction flow prototypes using a component-based approach with three levels of abstraction: interaction templates, behavior blocks, and behavior components.

- Mixed Reality HRI Testing supports the usability testing phase. By combining virtual content with physical robot hardware through mixed reality, designers can conduct low-cost user testing in real-world environments without building full engineering prototypes.

Virtual Bodystorming

In the VR environment, multiple designers enter a shared virtual space to co-design robot service scenarios. The process unfolds in two stages. First, in scene construction, designers select preset environments, add props, and configure virtual human agents to build the application context. Second, in role-play, designers act as the robot through Wizard of Oz controls (movement, head rotation, voice, and display) and as the user through embodied interaction, evaluating design concepts for the robot's service and social character.
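To make the Wizard of Oz controls more concrete, the Python sketch below models each control (movement, head rotation, voice, display) as a command that updates a virtual robot's state. It is a hypothetical illustration, not the platform's Unity implementation: the names `WozCommand`, `VirtualRobotState`, and `apply_command`, the metre and degree units, and the +/-90 degree head limit are all assumptions.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical command kinds mirroring the four Wizard of Oz controls in the text.
COMMAND_KINDS = {"move", "head", "say", "display"}


@dataclass
class WozCommand:
    kind: str      # one of COMMAND_KINDS
    value: object  # (dx, dy) in metres, head yaw in degrees, an utterance, or screen text


@dataclass
class VirtualRobotState:
    x: float = 0.0
    y: float = 0.0
    head_yaw_deg: float = 0.0
    screen: str = ""
    speech_log: List[str] = field(default_factory=list)


def apply_command(state: VirtualRobotState, cmd: WozCommand) -> VirtualRobotState:
    """Apply one wizard command to the virtual robot in place and return the state."""
    if cmd.kind not in COMMAND_KINDS:
        raise ValueError(f"unknown command kind: {cmd.kind!r}")
    if cmd.kind == "move":
        dx, dy = cmd.value
        state.x += dx
        state.y += dy
    elif cmd.kind == "head":
        # Clamp head yaw; the +/-90 degree limit is an assumed joint range.
        state.head_yaw_deg = max(-90.0, min(90.0, float(cmd.value)))
    elif cmd.kind == "say":
        state.speech_log.append(str(cmd.value))
    else:  # "display"
        state.screen = str(cmd.value)
    return state


# Usage: a wizard drives the virtual robot through a short greeting.
robot = VirtualRobotState()
script = [
    WozCommand("move", (1.0, 0.5)),
    WozCommand("head", 120),
    WozCommand("say", "Welcome!"),
    WozCommand("display", "Main menu"),
]
for command in script:
    apply_command(robot, command)
print(robot.x, robot.y, robot.head_yaw_deg, robot.speech_log, robot.screen)
# 1.0 0.5 90.0 ['Welcome!'] Main menu
```

Keeping every control as a uniform command record means a wizard's session can be logged and replayed, which is one plausible way to compare role-play runs.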

The platform supports modular robot prototype building: robot morphology is decomposed into head, body, arms, and base modules with configurable attachments, enabling rapid assembly and iteration of robot form factors directly in VR.
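The modular decomposition can be sketched as a small data model in which a prototype has one slot per module (head, body, arms, base) and each module carries a list of attachments. The class names, variant names such as `"round_head"`, and the attachment names are hypothetical; the sketch only shows how slot-based assembly supports swapping one module while keeping the rest.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The four module slots named in the text; a complete prototype fills each one.
REQUIRED_SLOTS = ("head", "body", "arms", "base")


@dataclass
class RobotModule:
    slot: str                                              # which slot this module fills
    variant: str                                           # hypothetical variant name
    attachments: List[str] = field(default_factory=list)   # e.g. "screen", "camera"


@dataclass
class RobotPrototype:
    modules: Dict[str, RobotModule] = field(default_factory=dict)

    def attach(self, module: RobotModule) -> None:
        if module.slot not in REQUIRED_SLOTS:
            raise ValueError(f"unknown slot: {module.slot!r}")
        # Attaching to an occupied slot replaces the module, enabling quick iteration.
        self.modules[module.slot] = module

    def missing_slots(self) -> List[str]:
        return [slot for slot in REQUIRED_SLOTS if slot not in self.modules]

    def is_complete(self) -> bool:
        return not self.missing_slots()


# Usage: assemble a prototype, then swap the head for an alternative form factor.
proto = RobotPrototype()
proto.attach(RobotModule("head", "round_head", ["screen"]))
proto.attach(RobotModule("body", "slim_body"))
print(proto.missing_slots())  # ['arms', 'base']
proto.attach(RobotModule("arms", "no_arms"))
proto.attach(RobotModule("base", "wheeled_base"))
proto.attach(RobotModule("head", "tablet_head", ["screen", "camera"]))
print(proto.is_complete(), proto.modules["head"].variant)  # True tablet_head
```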

Virtual HRI Flow Composer

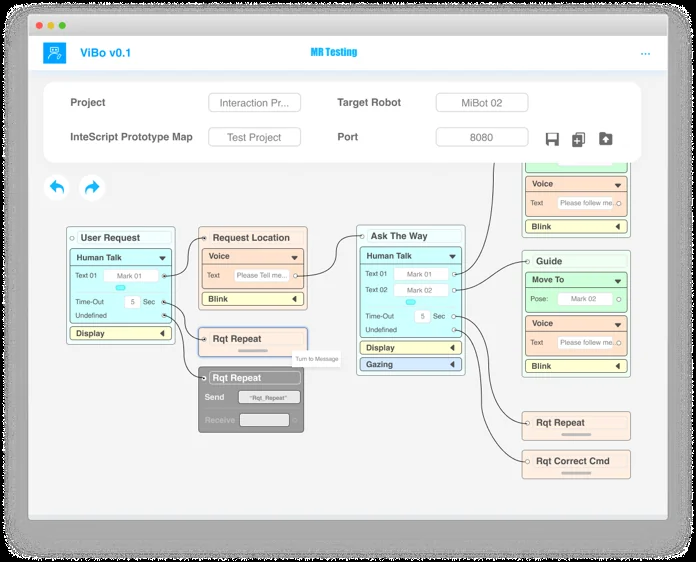

The Virtual HRI Flow Composer lets designers build robot interaction flow prototypes with a visual, component-based tool that requires no programming. It provides three levels of building blocks: behavior components (atomic interaction units such as voice output, movement, and screen display), behavior blocks (combinations of components that form one complete interaction step), and interaction templates (design patterns such as question-answer, command-action, and greeting-response).
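The three-level hierarchy can be sketched as nested data types: components are atomic, blocks group components into one step, and a template is a function that expands into a list of blocks. This is a hypothetical Python model, not the tool's actual data format; the component kinds (`"speak"`, `"move"`, `"display"`), block names, and the two template functions are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class BehaviorComponent:
    """Atomic interaction unit such as voice output, movement, or screen display."""
    kind: str      # illustrative kinds: "speak", "move", "display"
    payload: str


@dataclass(frozen=True)
class BehaviorBlock:
    """One complete interaction step, composed of one or more components."""
    name: str
    components: Tuple[BehaviorComponent, ...]


def greeting_response(greeting: str, screen_text: str) -> List[BehaviorBlock]:
    """Hypothetical greeting-response template: face the user, then greet."""
    return [
        BehaviorBlock("face_user", (BehaviorComponent("move", "turn_to_user"),)),
        BehaviorBlock("greet", (
            BehaviorComponent("speak", greeting),
            BehaviorComponent("display", screen_text),
        )),
    ]


def command_action(acknowledgement: str, motion: str) -> List[BehaviorBlock]:
    """Hypothetical command-action template: acknowledge, then act."""
    return [
        BehaviorBlock("acknowledge", (BehaviorComponent("speak", acknowledgement),)),
        BehaviorBlock("act", (BehaviorComponent("move", motion),)),
    ]


# Templates sit at the top level: each expands into behavior blocks.
TEMPLATES: Dict[str, Callable[..., List[BehaviorBlock]]] = {
    "greeting-response": greeting_response,
    "command-action": command_action,
}

blocks = TEMPLATES["greeting-response"]("Hello, how can I help?", "Welcome")
for block in blocks:
    print(block.name, [c.kind for c in block.components])
# face_user ['move']
# greet ['speak', 'display']
```

Modeling templates as parameterized factories (rather than fixed block lists) is one design choice that lets a designer reuse a pattern while filling in scenario-specific wording.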

Designers drag components onto a canvas, assemble behavior blocks, connect them into interaction flows, and test the prototype directly in the virtual environment.
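Connecting blocks into a flow and testing it can be approximated as walking a directed graph: each block lists transitions that are either unconditional or triggered by a user input, and a test run feeds in scripted inputs. The sketch below is a hypothetical illustration; the `Flow` shape, `run_flow`, the block names, and the input strings are assumptions rather than the composer's real format.

```python
from typing import Dict, List, Optional, Tuple

# Each block maps to outgoing transitions (user_input, next_block).
# A user_input of None marks an unconditional transition.
Flow = Dict[str, List[Tuple[Optional[str], str]]]


def run_flow(flow: Flow, start: str, user_inputs: List[str], max_steps: int = 50) -> List[str]:
    """Walk the flow from `start`, consuming scripted user inputs; return visited blocks."""
    visited = [start]
    current = start
    inputs = iter(user_inputs)
    for _ in range(max_steps):
        transitions = flow.get(current, [])
        if not transitions:
            break  # terminal block: the interaction ends here
        unconditional = [nxt for cond, nxt in transitions if cond is None]
        if unconditional:
            current = unconditional[0]
        else:
            said = next(inputs, None)
            matches = [nxt for cond, nxt in transitions if cond == said]
            if not matches:
                break  # unhandled input: a gap in the flow the designer should fix
            current = matches[0]
        visited.append(current)
    return visited


flow: Flow = {
    "greet": [(None, "ask_need")],
    "ask_need": [("directions", "guide"), ("nothing", "farewell")],
    "guide": [(None, "farewell")],
    "farewell": [],
}
print(run_flow(flow, "greet", ["directions"]))  # ['greet', 'ask_need', 'guide', 'farewell']
print(run_flow(flow, "greet", ["weather"]))     # ['greet', 'ask_need']
```

The second run stops at `ask_need` because no transition handles "weather", which is the kind of gap a quick test pass in the virtual environment is meant to surface.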

System Implementation

The platform is built on Unity. The host application manages assets (3D environments, props, and robot models), project configuration, and interaction prototype authoring. The VR application runs on Oculus Rift headsets and uses OptiTrack optical motion capture to track multiple users' bodies and synchronize their avatars. The MR application runs on HoloLens and overlays virtual robot interaction prototypes onto physical robot hardware, keeping the two in step through IoT data synchronization.
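One way to picture the IoT data synchronization between the physical robot and the HoloLens overlay is a stream of state messages that the MR side applies only if they are newer than what it already holds. The Python sketch below is hypothetical: the message fields, the JSON encoding, the sequence-number rule, and the names `RobotStateMessage` and `OverlaySync` are assumptions, and the actual transport between devices is not specified in the text and is left out.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict


@dataclass
class RobotStateMessage:
    """Hypothetical state message a physical robot might publish for the MR overlay."""
    robot_id: str
    seq: int          # monotonically increasing sequence number (assumed)
    x: float          # position in metres in a shared world frame (assumed)
    y: float
    yaw_deg: float
    screen: str       # content the virtual screen overlay should show


def encode(msg: RobotStateMessage) -> str:
    return json.dumps(asdict(msg))


class OverlaySync:
    """Keeps the latest state per robot on the MR side, ignoring stale or duplicate messages."""

    def __init__(self) -> None:
        self.latest: Dict[str, RobotStateMessage] = {}

    def on_message(self, raw: str) -> bool:
        msg = RobotStateMessage(**json.loads(raw))
        current = self.latest.get(msg.robot_id)
        if current is not None and msg.seq <= current.seq:
            return False  # out-of-order or duplicate delivery: keep the newer state
        self.latest[msg.robot_id] = msg
        return True


# Usage: a late-arriving older message does not overwrite newer state.
sync = OverlaySync()
m1 = encode(RobotStateMessage("robot-1", 1, 0.0, 0.0, 0.0, "Hello"))
m2 = encode(RobotStateMessage("robot-1", 2, 0.5, 0.0, 15.0, "Follow me"))
print(sync.on_message(m2))              # True
print(sync.on_message(m1))              # False
print(sync.latest["robot-1"].screen)    # Follow me
```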