System Overview

Thematic joke writing is inherently context-driven: creators hunt for timely stories, memes, and references, frame them into angles that can support a setup, and then weave them into a concise setup-punchline structure. Although LLMs can generate jokes conversationally, ordinary chat interfaces seldom give creators enough agency, control, or timely access to such source material. Jokeasy addresses this gap by coupling a search-enabled LLM agent with a structured visual canvas.

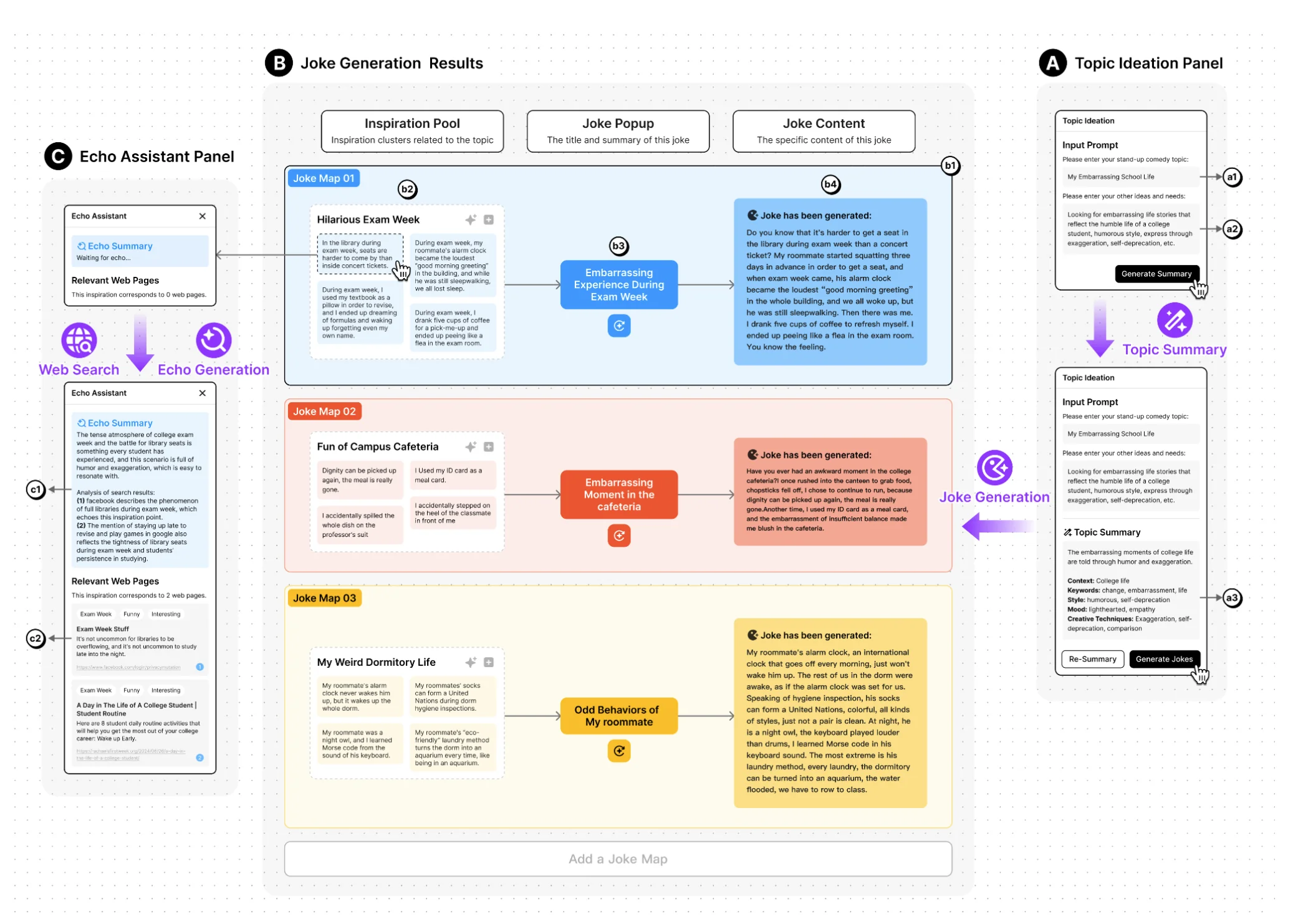

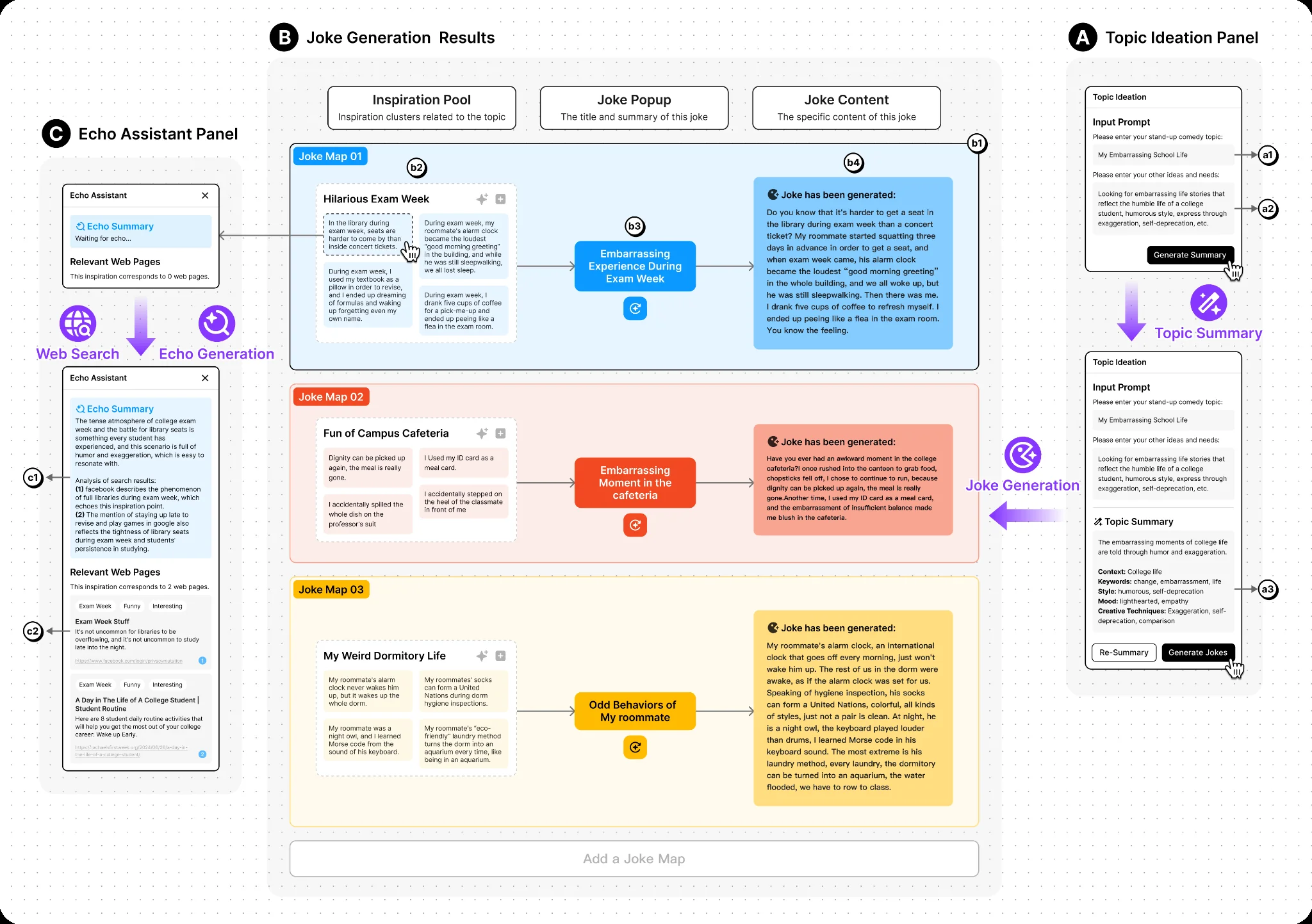

The system is built around three interrelated design considerations: (DC1) a dual-role LLM agent that acts as both a material scout and a prototype writer; (DC2) a multistage collaboration workflow built on editable inspiration blocks derived from search results; and (DC3) a visual, object-based canvas that externalizes the conversation into tangible, manipulable elements.

Multistage Workflow

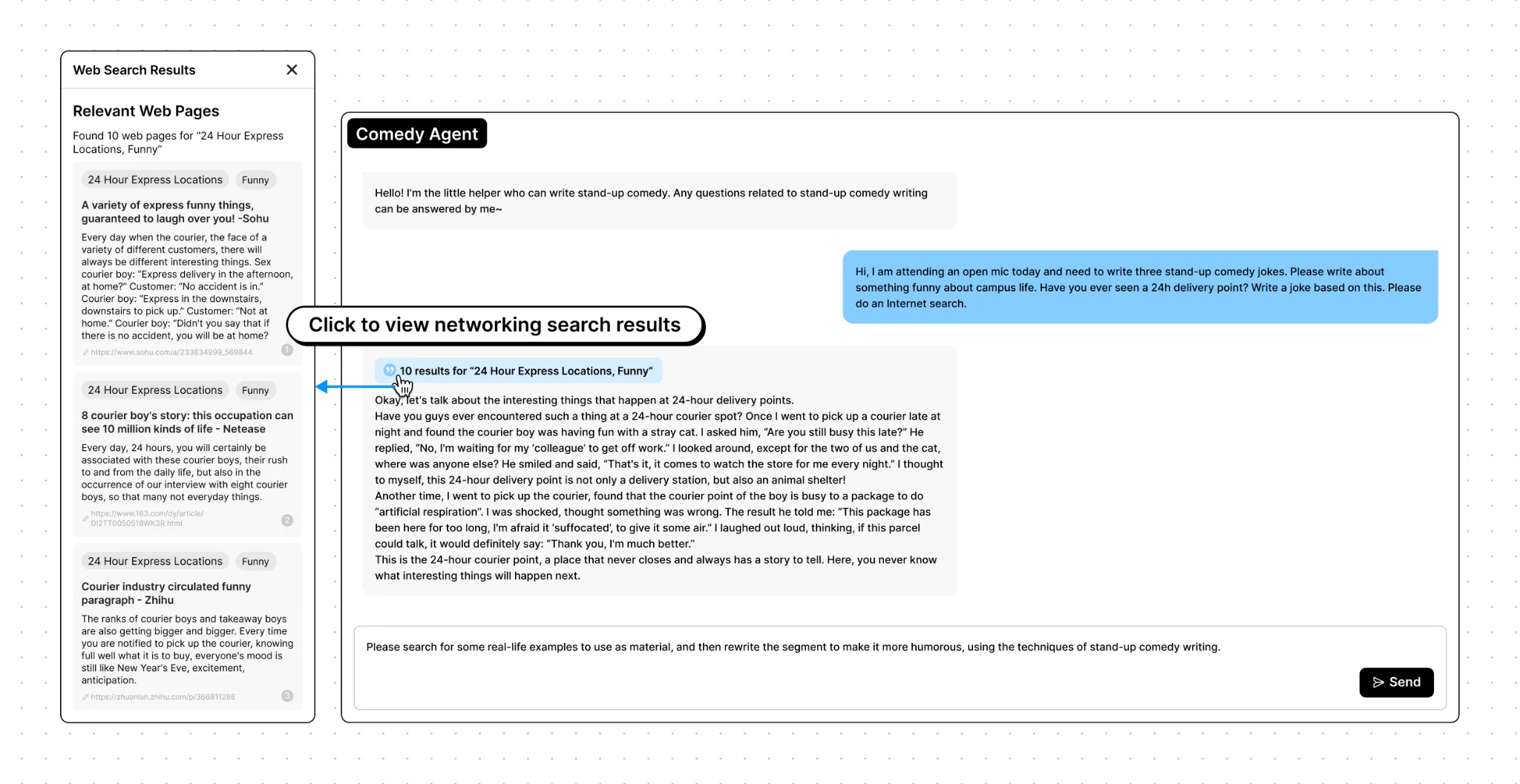

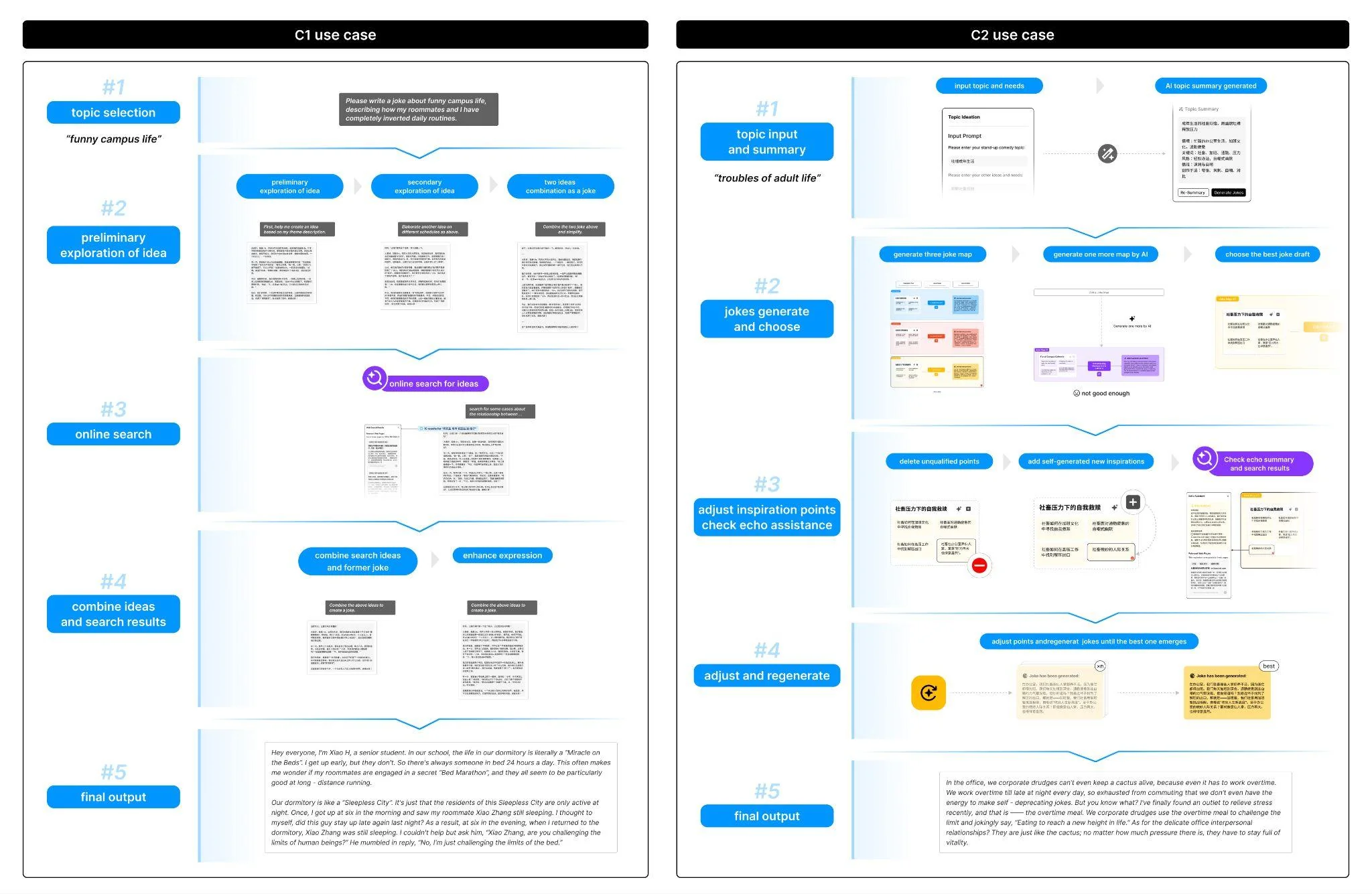

The joke creation process unfolds in four sequential phases. In Topic Ideation, the user enters a creation topic along with preferred styles and comedic techniques; Jokeasy generates a structured topic summary for review. During Inspiration Generation and Initial Prototype Creation, the LLM agent derives three inspiration themes, expands each into search queries, retrieves web content via the Tavily API, and distills the results into concise inspiration blocks that populate an inspiration pool. Each pool is merged with the topic summary to produce a provisional joke title and prototype joke content, together forming a joke map. Three such maps are presented side by side on the canvas.
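The data flow of the first two phases can be sketched as follows. This is a minimal illustration, not the authors' implementation: every type and function name (`TopicSummary`, `InspirationBlock`, `JokeMap`, `Stages`, `buildJokeMaps`) is hypothetical, and the LLM and Tavily calls are replaced by injected stand-in functions that run synchronously for readability.

```typescript
// All names are hypothetical; the source does not publish its data model.
interface TopicSummary {
  topic: string;
  styles: string[];
  techniques: string[];
}

interface InspirationBlock {
  text: string;      // concise inspiration distilled from a search result
  sourceUrl: string; // where the material was retrieved from
}

interface JokeMap {
  theme: string;
  pool: InspirationBlock[]; // the inspiration pool for this theme
  title: string;            // provisional joke title
  prototype: string;        // prototype joke content
}

interface SearchResult {
  url: string;
  content: string;
}

// Stand-ins for the LLM and search steps. A real system would make these
// asynchronous (LLM requests, web search); they are synchronous here only
// to keep the data flow easy to follow.
interface Stages {
  deriveThemes(summary: TopicSummary): string[];
  expandQueries(theme: string): string[];
  search(query: string): SearchResult[];
  distill(result: SearchResult): string;
  draft(summary: TopicSummary, pool: InspirationBlock[]): { title: string; prototype: string };
}

function buildJokeMaps(summary: TopicSummary, stages: Stages): JokeMap[] {
  // The text describes three themes, so the sketch keeps at most three.
  const themes = stages.deriveThemes(summary).slice(0, 3);
  return themes.map((theme) => {
    const pool: InspirationBlock[] = [];
    for (const query of stages.expandQueries(theme)) {
      for (const result of stages.search(query)) {
        pool.push({ text: stages.distill(result), sourceUrl: result.url });
      }
    }
    // Merge the pool with the topic summary to draft a title and prototype.
    const { title, prototype } = stages.draft(summary, pool);
    return { theme, pool, title, prototype };
  });
}
```

Passing the stages in as an object keeps the pipeline testable without network access; swapping the stubs for real LLM and search clients would not change the shape of the resulting joke maps.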

In the Inspiration Validation and Collaborative Refinement phase, the writer inspects and refines each joke map. Selecting any inspiration block opens the Echo Assistant, which displays the retrieved source material and an echo summary explaining its relevance. Writers can modify, add, or remove blocks; every change triggers the system to re-run the search and regenerate the echo summary. Finally, in Joke Synthesis, after iterative refinement the system produces the final draft of each thematic joke.
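The refinement loop described above can be illustrated with a small sketch. It assumes a hypothetical `Block` shape and an injected `refresh` function standing in for "re-run the search and regenerate the echo summary"; none of these names come from the source. It also assumes that removing a block simply drops it, since there is nothing left to re-search.

```typescript
// Hypothetical names throughout; only the behaviour (edit, then re-search and
// regenerate the echo summary) is taken from the description above.
interface Block {
  id: string;
  text: string;
  sources: string[];   // retrieved source material shown by the Echo Assistant
  echoSummary: string; // explanation of why the material is relevant
}

type Edit =
  | { kind: "add"; id: string; text: string }
  | { kind: "modify"; id: string; text: string }
  | { kind: "remove"; id: string };

// Stand-in for the search plus echo-summary regeneration step.
type Refresh = (text: string) => { sources: string[]; echoSummary: string };

function applyEdit(pool: Block[], edit: Edit, refresh: Refresh): Block[] {
  if (edit.kind === "remove") {
    // Assumption: a removed block needs no refresh.
    return pool.filter((b) => b.id !== edit.id);
  }
  // Added or modified text gets fresh sources and a fresh echo summary.
  const refreshed: Block = { id: edit.id, text: edit.text, ...refresh(edit.text) };
  if (edit.kind === "add") {
    return [...pool, refreshed];
  }
  return pool.map((b) => (b.id === edit.id ? refreshed : b));
}
```

Returning a new array instead of mutating the pool makes it straightforward for a canvas front end to re-render only when the pool actually changes.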

System Architecture

The front-end of Jokeasy is developed as a Figma widget plugin; the back-end is built using Node.js with moonshot-v1-auto as the LLM backbone. The system implements core functions using an LLM-chain method with structured output. The prompt preamble is organized into six key fields: [Role], [Input Context], [Overall Rules], [Output Formatting], [Workflow], and [Example]. The search functionality is implemented using the Tavily API.
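As a rough illustration of the six-field preamble and of structured output, the sketch below renders the fields in the order listed and validates one JSON response. Only the six field names come from the text. The helper names (`renderPreamble`, `parseThemes`), the `ThemeOutput` shape, and the bracketed-heading layout are assumptions made for the example.

```typescript
// The six field names come from the text; everything else is hypothetical.
const FIELD_ORDER = [
  "Role",
  "Input Context",
  "Overall Rules",
  "Output Formatting",
  "Workflow",
  "Example",
] as const;

type FieldName = (typeof FIELD_ORDER)[number];
type Preamble = Record<FieldName, string>;

// Render each field as a bracketed heading followed by its content.
function renderPreamble(preamble: Preamble): string {
  return FIELD_ORDER.map((name) => `[${name}]\n${preamble[name].trim()}`).join("\n\n");
}

// Assumed shape of one structured response in the chain.
interface ThemeOutput {
  themes: string[];
}

// Validate the model's raw text before passing it to the next chain step.
function parseThemes(raw: string): ThemeOutput {
  const data: unknown = JSON.parse(raw);
  if (typeof data !== "object" || data === null) {
    throw new Error("expected a JSON object");
  }
  const themes = (data as { themes?: unknown }).themes;
  if (!Array.isArray(themes) || !themes.every((t) => typeof t === "string")) {
    throw new Error("expected 'themes' to be an array of strings");
  }
  return { themes: themes as string[] };
}
```

Validating each step's output before it feeds the next step is one common way to keep a chain of LLM calls from propagating malformed responses.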

User Study

We recruited 18 participants: 13 hobbyists and 5 expert users (including professional comedians with over five years of stand-up experience, an HCI specialist, and an AI researcher). Each participant used both Jokeasy and a conversational baseline system in counterbalanced order, completing a stand-up comedy joke creation task with each. Sessions lasted approximately one hour, combining think-aloud protocols with semi-structured interviews.
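For readers unfamiliar with counterbalancing, the sketch below shows one simple way to assign condition orders. The source states only that the order was counterbalanced; alternating the starting condition by participant index is an assumption, and `assignOrders` and the participant IDs are hypothetical.

```typescript
type Condition = "Jokeasy" | "Baseline";

interface Assignment {
  participant: string;
  order: [Condition, Condition];
}

// Alternate the starting condition so both orders are used equally often.
// Assumption: simple alternation; the study's actual scheme is not described.
function assignOrders(participantIds: string[]): Assignment[] {
  return participantIds.map((participant, index) => {
    const order: [Condition, Condition] =
      index % 2 === 0 ? ["Jokeasy", "Baseline"] : ["Baseline", "Jokeasy"];
    return { participant, order };
  });
}
```

With an even number of participants, this scheme splits them evenly between the two orders, which helps separate the effect of the system from the effect of using it first or second.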

Most participants (13/18) favoured Jokeasy over the baseline. They described its four-stage workflow as “organised and sequential from inspiration to the final product” and felt it “integrated several small functions involved in joke writing.” Fourteen participants praised the canvas for its structured clarity, comparing it to a mind map. Integrated search helped users “continuously spark new ideas” and “trace back why the AI generated this inspiration.”