GPFEI Framework

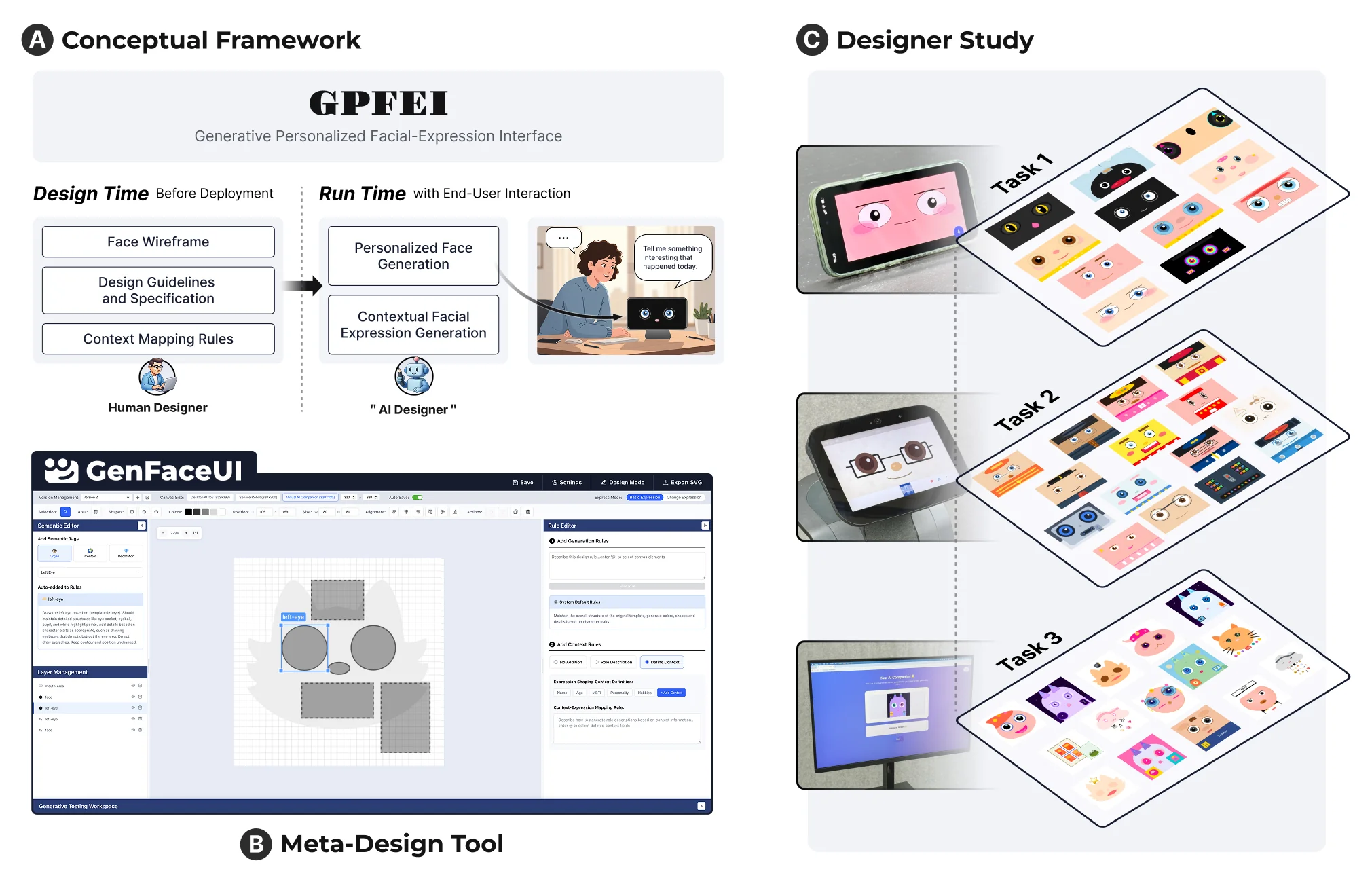

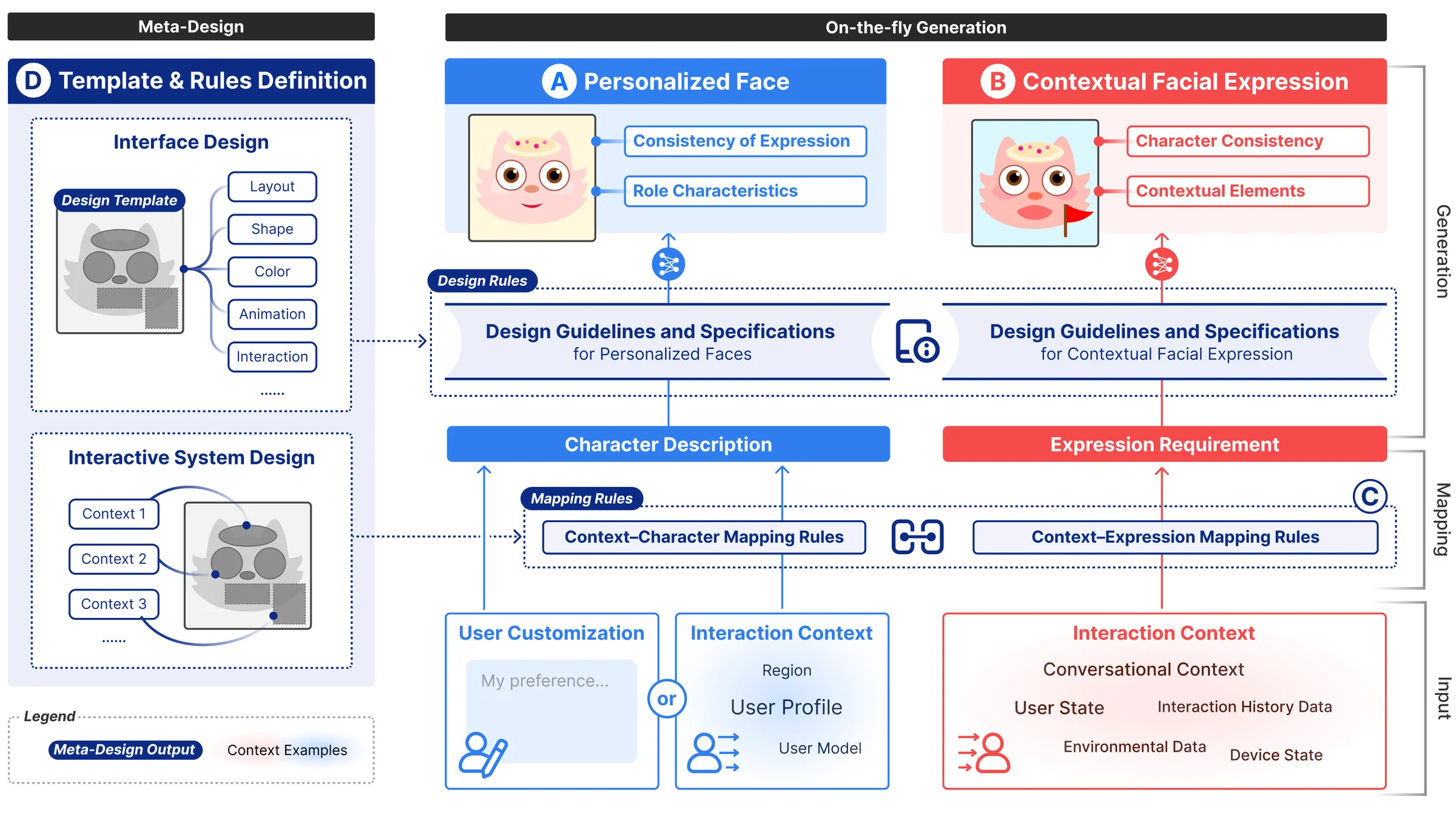

Generative facial expression interfaces pose a design problem that differs from that of traditional asset-authored expression systems. Instead of fully specifying every facial state before deployment, designers need to define the conditions, constraints, and visual vocabulary that guide generation at run time. To address this, we propose the Generative Personalized Facial Expression Interface (GPFEI) framework, grounded in a meta-design perspective.

GPFEI structures the design space around three core elements: rule-bounded generative spaces, character identity, and context-expression mapping. At design time, designers define facial elements, layouts, colors, semantic tags, and mapping rules that constrain the space of possible outputs. At run time, the AI system interprets interaction context and generates facial expressions that remain aligned with those authored constraints. This reframes the designer’s role from manually authoring every expression asset to designing the rules and structures through which the system can evolve expressively over time.
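To make the design-time/run-time split concrete, the Python sketch below models a rule-bounded generative space. It is a minimal illustration under our own assumptions, not GenFaceUI's implementation. The names `ExpressionSpace`, `MappingRule`, and `generate_expression`, the slot and tag names, and the `propose` function (a stand-in for the generative model) are all hypothetical. Design-time constraints are plain data. The run-time step matches the interaction context to a mapping rule, asks the generator for a face, and then clamps the proposal back into the authored space.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ExpressionSpace:
    """Hypothetical design-time spec of a rule-bounded generative space."""
    elements: dict[str, list[str]]  # allowed visual elements per facial slot
    palette: list[str]              # authored color palette
    tags: dict[str, set[str]] = field(default_factory=dict)  # element -> semantic tags


@dataclass
class MappingRule:
    """Hypothetical context-expression rule: a context condition -> target tag."""
    condition: Callable[[dict], bool]
    target_tag: str


def generate_expression(space: ExpressionSpace, rules: list[MappingRule],
                        context: dict, propose: Callable[[str], dict]) -> tuple[str, dict]:
    # Pick the first rule whose condition matches the interaction context.
    target = next((r.target_tag for r in rules if r.condition(context)), "neutral")
    proposal = propose(target)  # stand-in for the generative model's output
    face = {}
    for slot, allowed in space.elements.items():
        choice = proposal.get(slot)
        if choice not in allowed:
            # Out-of-vocabulary: fall back to an allowed element carrying the
            # target tag, otherwise to the first allowed element for the slot.
            tagged = [e for e in allowed if target in space.tags.get(e, set())]
            choice = tagged[0] if tagged else allowed[0]
        face[slot] = choice
    color = proposal.get("color")
    face["color"] = color if color in space.palette else space.palette[0]
    return target, face


# Usage: a toy space, one rule, and a generator that ignores the tag and
# proposes an out-of-vocabulary mouth and an off-palette color.
space = ExpressionSpace(
    elements={"eyes": ["dot_eyes", "happy_eyes"], "mouth": ["flat_line", "smile_arc"]},
    palette=["#4A90E2", "#F5A623"],
    tags={"happy_eyes": {"joy"}, "smile_arc": {"joy"}},
)
rules = [MappingRule(lambda c: c.get("sentiment") == "positive", "joy")]


def propose(tag: str) -> dict:
    return {"eyes": "happy_eyes", "mouth": "grin_3d", "color": "#FF0000"}


print(generate_expression(space, rules, {"sentiment": "positive"}, propose))
# → ('joy', {'eyes': 'happy_eyes', 'mouth': 'smile_arc', 'color': '#4A90E2'})
```

Clamping after generation is one possible design choice. A real system might instead constrain the generator's prompt or decoding directly, but a post-hoc check keeps the authored constraints authoritative regardless of what the model returns.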

GenFaceUI System

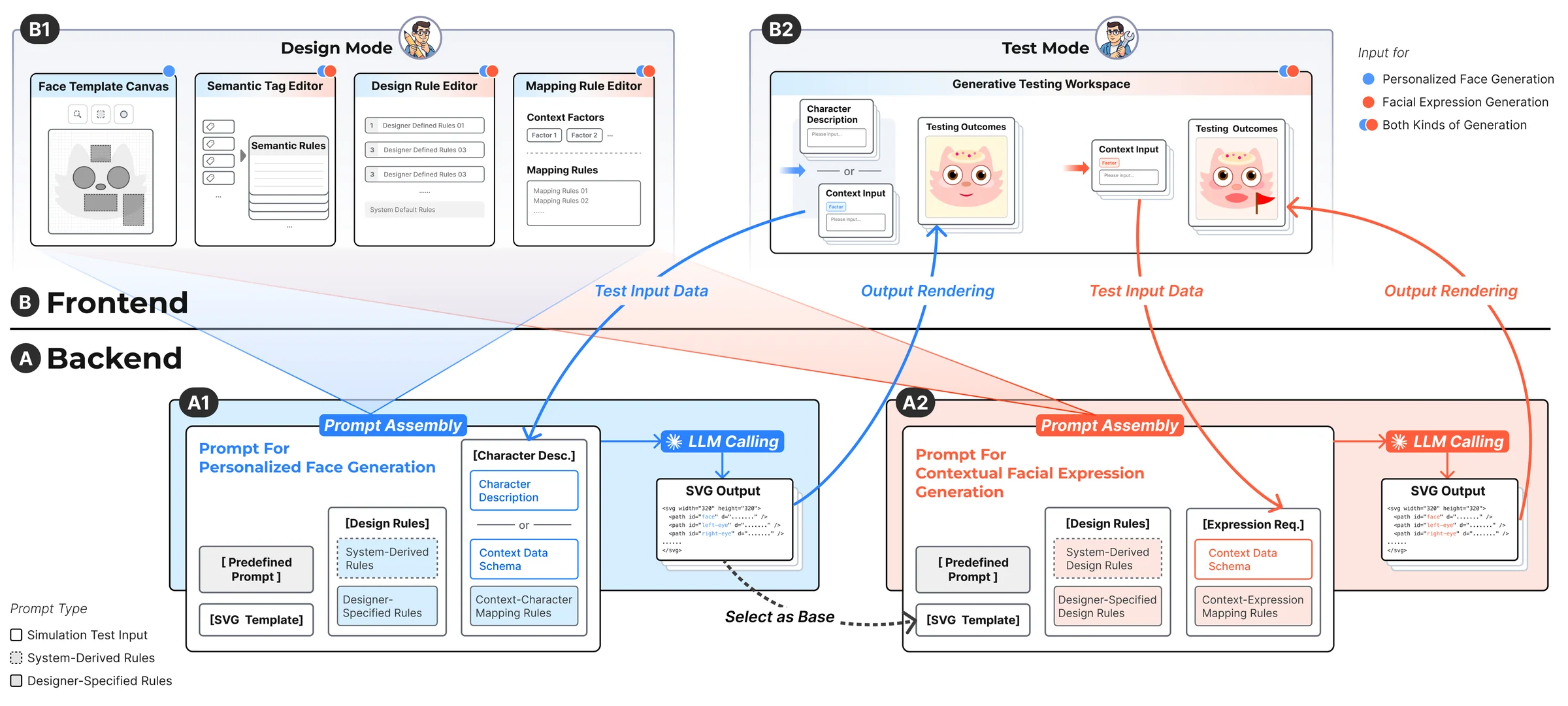

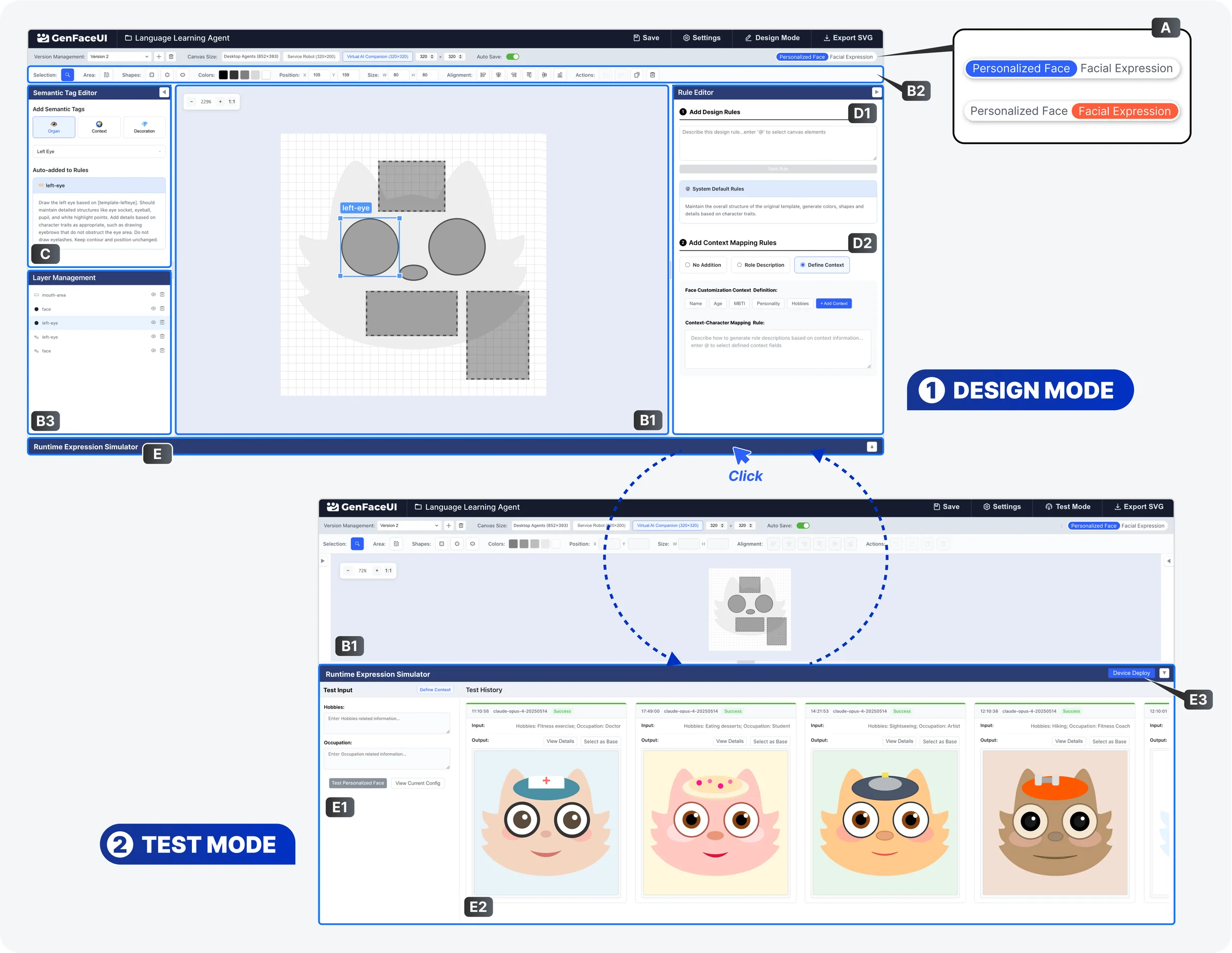

To operationalize the framework, we developed GenFaceUI, a proof-of-concept meta-design tool for generative personalized facial expression interfaces. The system supports the full workflow of facial expression interface design: creating facial templates, assigning semantic tags to visual elements, authoring context-expression rules, and testing generation outcomes with the model in the loop. Rather than treating generation as an opaque backend process, GenFaceUI makes the structure of the design space visible and editable to designers.

The system combines a design canvas, semantic element management, rule authoring, and iterative preview into one environment. Designers can compose faces from modular visual elements, define how expressions should change across contexts, and preserve character consistency by explicitly constraining what can or cannot vary. This makes the system suitable not only for expressive chatbot faces, but also for role-specific service agents and more personalized AI companions.
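One way to read "explicitly constraining what can or cannot vary" is as per-slot locks on a base character template. The sketch below assumes that interpretation. `CharacterTemplate`, `locked`, and `enforce_identity` are hypothetical names, not GenFaceUI's API. Locked slots (for example, a service agent's role-specific headwear or brand color) always come from the template, while unlocked slots accept generated variation.

```python
from dataclasses import dataclass


@dataclass
class CharacterTemplate:
    """Hypothetical base character composed from modular visual elements."""
    base: dict[str, str]     # slot -> element id (or color) in the base face
    locked: frozenset[str]   # identity-defining slots that must never change


def enforce_identity(template: CharacterTemplate, generated: dict) -> tuple[dict, list[str]]:
    """Reset every locked slot to the template value and report which slots
    had to be reverted (useful to surface in a design-time preview)."""
    face = dict(generated)
    reverted = []
    for slot in sorted(template.locked):
        if face.get(slot) != template.base[slot]:
            face[slot] = template.base[slot]
            reverted.append(slot)
    return face, reverted


# Usage: a service-agent character whose cap and color define its role.
concierge = CharacterTemplate(
    base={"eyes": "round_eyes", "mouth": "smile_arc", "hat": "concierge_cap", "color": "#1F3A5F"},
    locked=frozenset({"hat", "color"}),
)
generated = {"eyes": "wide_eyes", "mouth": "open_o", "hat": "party_hat", "color": "#1F3A5F"}
face, reverted = enforce_identity(concierge, generated)
print(face)      # {'eyes': 'wide_eyes', 'mouth': 'open_o', 'hat': 'concierge_cap', 'color': '#1F3A5F'}
print(reverted)  # ['hat']
```

Returning the list of reverted slots, rather than silently overwriting them, lets a preview show designers where generation pushed against their identity constraints.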

Designer Study

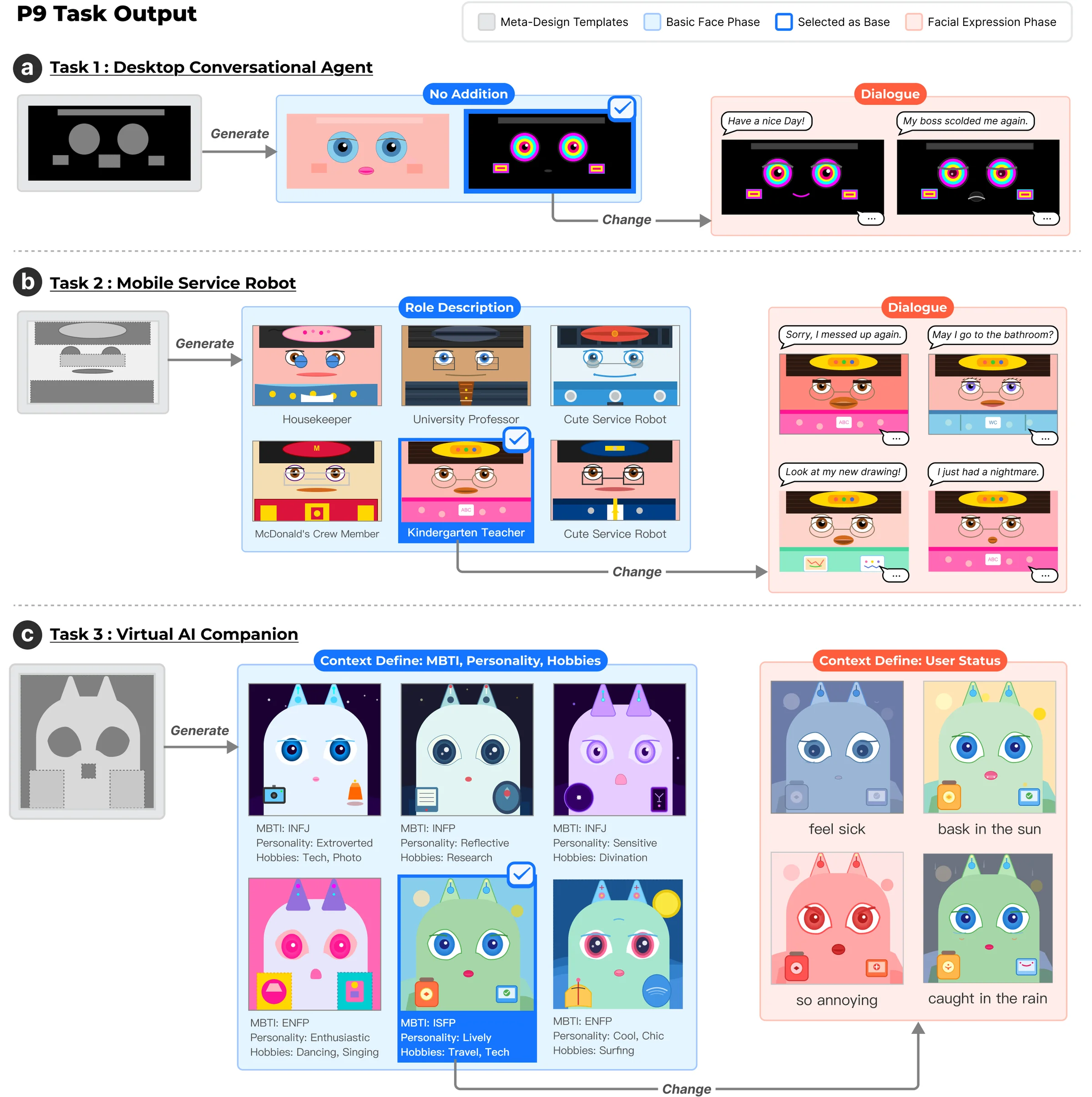

We evaluated GenFaceUI through a qualitative study with 12 designers using three representative tasks: designing a basic chatbot face, customizing a service robot with role-specific visual identities, and creating a personalized AI companion. These tasks were chosen to cover different levels of complexity and different forms of character adaptation, allowing us to examine how designers engaged with meta-design practices in realistic scenarios.

The study showed that designers perceived clear gains in controllability and consistency when working with rule-based generative expressions; it also surfaced important limitations. Participants needed more structured visual mechanisms for understanding the design space and lighter-weight explanations of how the system produced its outputs. These findings suggest that future generative facial expression tools should support not only flexible generation but also stronger interpretability and designer-facing scaffolding.